Labor-economics research counts jobs. HR-tech research surveys recruiters. Web3-native research tracks tokens. None measures the layer that decides whether a hire works: the proof underneath the claim.

That gap is the reason this hub exists. The State of Verified Work is the canonical methodology, data-source disclosure, and definition framework that every Bondex report plugs into, so that a number you read on a Bondex page traces back to a dataset, a sample size, a time window, and an instrument.

We prove, not posture. This is what proving looks like at the methodology layer.

The State of Verified Work is Bondex’s research hub: a canonical methodology, data-source disclosure, and definition framework that every future Bondex report plugs into. It defines verified work as the labor-economics category produced by cryptographically anchored attestations, and lays out the measurement instruments, citation conventions, and evidence-trail structure used across all research.

Verified work is labor activity (employment, project participation, skill demonstration, peer endorsement) that has been attested by an issuer who can confirm it (employer, institution, peer, on-chain protocol) and cryptographically anchored, so any verifier can independently confirm the claim without trusting the platform that hosts it.

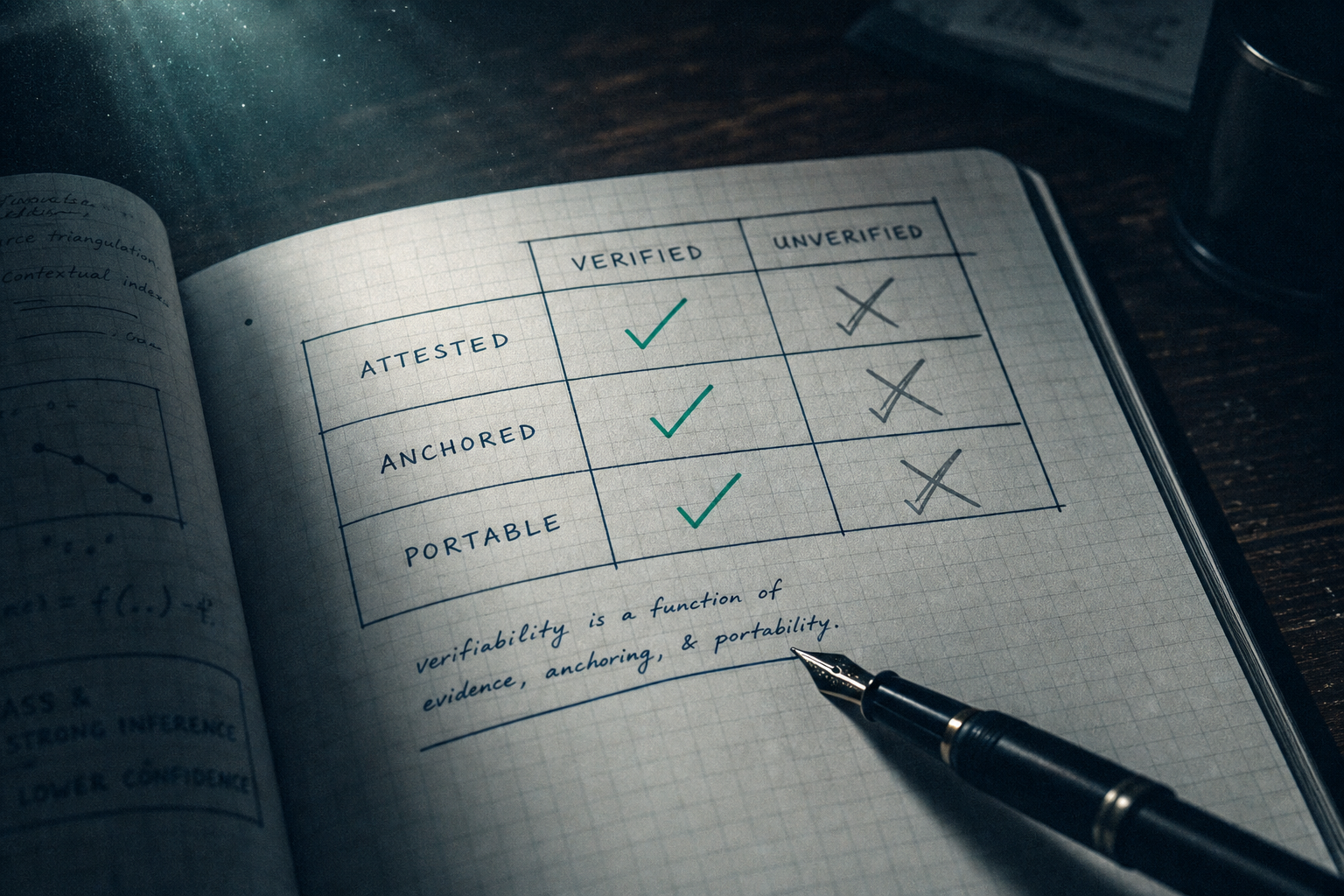

Three properties make a piece of work “verified” in the way this research uses the term: it is attested, anchored, and portable.

Anything missing one of those properties is unverified work, including most of what currently fills the legacy labor record: CV claims, LinkedIn endorsements, references that confirm only dates and titles, single-skill test results. All of it sits outside this category. The boundary is a measurement choice, not a value judgment.
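The boundary can be expressed as a simple all-or-nothing check. The sketch below is illustrative only: the `WorkRecord` class and its field names are hypothetical, not a Bondex schema, and it assumes the three properties are the ones named elsewhere on this page (attested, anchored, portable).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkRecord:
    """One labor-activity claim (hypothetical shape, for illustration only)."""
    description: str
    attested: bool  # an issuer who can confirm the claim has attested to it
    anchored: bool  # the attestation is cryptographically anchored
    portable: bool  # the record travels with the worker, independent of one platform


def is_verified(record: WorkRecord) -> bool:
    """A record counts as verified work only if all three properties hold."""
    return record.attested and record.anchored and record.portable


cv_claim = WorkRecord("Self-reported CV entry",
                      attested=False, anchored=False, portable=True)
signed_credential = WorkRecord("Employer-signed, anchored credential",
                               attested=True, anchored=True, portable=True)

print(is_verified(cv_claim))           # False
print(is_verified(signed_credential))  # True
```

Missing any single property puts a record outside the category, which matches the measurement-choice framing above: the check classifies records, it does not rank them.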

Existing research covers labor from three angles, and none of them touches the proof layer.

Labor-economics research (the U.S. Bureau of Labor Statistics, OECD employment data, national statistics offices) measures job counts, wages, unemployment rates, and sectoral shifts.

The instruments are employer surveys, payroll administrative data, and household surveys. The unit of observation is the job, not the worker, and never the worker’s verifiable record.

You can read every BLS report ever published and not learn whether the people in those jobs can prove they did the work.

HR-tech research (SHRM, the Josh Bersin Company, Gartner HR) measures the recruiter and the hiring process. Surveys ask employers about time-to-hire, source-of-hire, retention, and ATS spend. The vendor-side perspective is useful for benchmarking hiring operations, but it studies the buyer side of the labor market, not the proof underneath any individual hire.

Web3-native research (Dune, Messari, on-chain analytics) measures token flows, protocol revenue, and governance participation. It is excellent for crypto-native phenomena. It is not designed to study labor markets, and in most cases it cannot distinguish between an on-chain action that proves work and an on-chain action that proves nothing.

Verified-work research sits between those three. It is candidate-side rather than employer-side. It is attestation-anchored rather than self-reported. It crosses employer boundaries by construction, because the proof belongs to the worker. The category needs different instruments (attestation registries, issuer-side ledgers, cross-platform reputation graphs) and a different unit of observation: the verifiable record, not the survey response.

The category did not exist as a research surface five years ago, because the underlying infrastructure did not exist at scale. Now there are 300K+ on-chain attestations issued through Bondex alone, employer-side verification flows that produce signed credentials, and a growing graph of cross-platform proofs. The data exists. Somebody has to define how to measure it without distortion. That is the work this hub does.

Every Bondex report uses the same definitions. Pinning them down once means a stat in a 2026 report compares cleanly to a stat in a 2028 report. The four core terms:

Verified work. Labor activity attested by an issuer and cryptographically anchored, as defined above. This is the umbrella entity that the rest of the framework hangs from.

Verifiable credential. A claim about a worker (qualification, employment, role, skill) that has been issued by a party with first-hand knowledge of the claim and signed in a way that any third party can verify without contacting the issuer. The technical reference is the W3C Verifiable Credentials Data Model. Where this hub uses the term, the underlying instrument is either a W3C VC or an on-chain attestation that satisfies the same three properties (attested, anchored, portable).

Continuous verification. A reputation signal that updates as the worker accumulates new attested activity, rather than being fixed at hire. The contrast is point-in-time evaluation (interview, skills test, reference call), which captures one moment and decays from there. Continuous verification is the architectural shift cybersecurity made with zero-trust a decade ago, applied to labor.

Portable reputation. The property that a worker’s verified record can travel across employers, platforms, and borders without depending on any single party to keep it alive. A reputation that lives inside one company’s HR system dies when the relationship ends. A portable reputation does not.

These four terms are load-bearing. Every Bondex report defines new constructs against them, never around them.

Verified-work research draws from four primary sources. Each has explicit coverage and explicit bias. Both are disclosed every time the data is used.

| Source | Coverage | Sample weight | Known bias |
|---|---|---|---|
| Bondex platform | 2M+ users · 6M+ app downloads · 1.5M peak monthly active mobile · 93 countries · 300K+ on-chain attestations | Primary | Over-represents Web3-native, mobile-first, and verification-curious workers. Under-represents traditional labor markets. |
| Web3.career | 1.7M+ monthly visits · 100K+ talent profiles · 30–50 new positions/day | Primary (job-market data) | Web3-native job market only. Excludes traditional sectors. |
| Remote3 | Remote Web3 roles, acquired Nov 2025 | Supplementary | Remote-only subset, narrower geographic distribution than parent platform. |
| On-chain attestation registries | Publicly verifiable credential issuances | Supplementary | Issuer-dependent; captures only attestations that opted into public anchoring. |

External sources are used where appropriate and cited at point of use:

Each data point published in a Bondex report is tagged with its source, sample size, and time window. The default citation format appears in the next section.

Methodology is the part of research most often skipped and most consequential when the work is challenged. The Bondex methodology stack is published in full, not summarized, so any reader can rebuild the analysis from the disclosed steps.

For any research question, the sampling decision is explicit. Three of the most common cases:

Selection criteria (what counts as “in” the sample for a given research question) are specified at the top of every report, before any numbers appear.

A research question reads signals. The set of signals read, and the set explicitly ignored, is part of the methodology.

For example, when measuring fraud-resistance of a credential type, the instrument reads: issuer identity, signature validity, anchoring transaction, issuance date, revocation status, and any peer counter-attestations. The instrument ignores: self-reported claims on the same profile, unsigned references, and any signal that cannot be independently verified.

That ignore-list matters as much as the read-list. It determines what the resulting number means.
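The read-list and ignore-list in the fraud-resistance example can be sketched as an explicit filter. This is a minimal illustration, not Bondex's actual instrument: the signal names are hypothetical identifiers derived from the example above, and the sketch assumes that any signal not on the read-list is ignored by default.

```python
# Hypothetical signal names, taken from the fraud-resistance example in the text.
READ_SIGNALS = frozenset({
    "issuer_identity",
    "signature_validity",
    "anchoring_transaction",
    "issuance_date",
    "revocation_status",
    "peer_counter_attestations",
})


def apply_instrument(raw_signals: dict) -> tuple[dict, list[str]]:
    """Split a profile's signals into what the instrument reads and what it drops.

    Returns (kept, dropped) so the ignore-list can be disclosed with the result.
    """
    kept = {name: value for name, value in raw_signals.items() if name in READ_SIGNALS}
    dropped = sorted(name for name in raw_signals if name not in READ_SIGNALS)
    return kept, dropped


profile = {
    "issuer_identity": "did:example:acme",    # illustrative value
    "revocation_status": "active",
    "self_reported_claims": ["10 years of Solidity"],
    "unsigned_references": ["former manager"],
}

kept, dropped = apply_instrument(profile)
print(sorted(kept))  # ['issuer_identity', 'revocation_status']
print(dropped)       # ['self_reported_claims', 'unsigned_references']
```

Returning the dropped names alongside the kept signals keeps the ignore-list visible in the output instead of leaving it implicit in the code.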

Time-series data on a growing verification network is easy to misread. A quarterly attestation count rising over time can reflect more workers, more attestations per worker, more issuers, or all three. The default treatment in Bondex reports:

When a report shows a percentage change, the denominator is named in the same paragraph.
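One way to separate the components of a rising attestation count is to decompose growth into a worker-count ratio and an attestations-per-worker ratio, and to name the denominator whenever a percentage is printed. The sketch below uses made-up numbers and hypothetical names (`QuarterSnapshot`, `growth_decomposition`, `pct_change`); it illustrates the idea and is not the hub's documented treatment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QuarterSnapshot:
    """Hypothetical per-quarter totals (illustrative numbers only)."""
    label: str
    attestations: int
    active_workers: int
    active_issuers: int

    @property
    def per_worker(self) -> float:
        return self.attestations / self.active_workers


def growth_decomposition(prev: QuarterSnapshot, curr: QuarterSnapshot) -> dict:
    """Total growth ratio = worker-count ratio x attestations-per-worker ratio."""
    return {
        "total_ratio": curr.attestations / prev.attestations,
        "worker_ratio": curr.active_workers / prev.active_workers,
        "per_worker_ratio": curr.per_worker / prev.per_worker,
        "issuer_ratio": curr.active_issuers / prev.active_issuers,
    }


def pct_change(prev_value: float, curr_value: float, denominator_name: str) -> str:
    """Format a percentage change with its denominator named alongside it."""
    change = (curr_value - prev_value) / prev_value * 100
    return f"{change:+.1f}% (denominator: {denominator_name})"


q1 = QuarterSnapshot("Q1", attestations=1000, active_workers=400, active_issuers=50)
q2 = QuarterSnapshot("Q2", attestations=1500, active_workers=500, active_issuers=60)

parts = growth_decomposition(q1, q2)
print(parts)
print(pct_change(q1.attestations, q2.attestations, "Q1 attestation count"))
# +50.0% (denominator: Q1 attestation count)
```

In this made-up example the 1.5x rise in attestations splits into 1.25x more workers and 1.2x more attestations per worker, which a single headline count would hide.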

Every reported number traces to source data through a documented path. The components of the path:

A reader who wants to challenge a Bondex number can request the evidence trail for that number. The methodology hub welcomes the scrutiny; the integrity of the research category depends on it.

Every statistic Bondex publishes is cited in a standard format, so the number can be quoted accurately and the methodology behind it can be located.

The default citation block for a Bondex internal statistic:

[Statistic.]

Dataset: [source]. Sample: [n records, scope]. Window: [date range]. Last refreshed: [date]. Source: [Bondex internal data | named external source].

A worked example (illustrative format; actual dates verified against the platform ledger before publish):

300K+ on-chain attestations issued through the Bondex network

Dataset: Bondex platform attestation ledger. Sample: all attestations with successful on-chain anchoring. Window: [platform launch date] through [most recent refresh]. Last refreshed: [refresh date]. Source: Bondex internal data.
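The citation format above maps naturally onto a small record type. The following sketch is an assumption about how such a block could be rendered programmatically; the `CitationBlock` class is hypothetical, and the bracketed placeholders from the worked example are kept as-is rather than filled in.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CitationBlock:
    """Fields of the default citation block (hypothetical class, for illustration)."""
    statistic: str
    dataset: str
    sample: str
    window: str
    last_refreshed: str
    source: str

    def render(self) -> str:
        """Render the statistic followed by its citation line."""
        return (
            f"{self.statistic}\n\n"
            f"Dataset: {self.dataset}. Sample: {self.sample}. "
            f"Window: {self.window}. Last refreshed: {self.last_refreshed}. "
            f"Source: {self.source}."
        )


example = CitationBlock(
    statistic="300K+ on-chain attestations issued through the Bondex network",
    dataset="Bondex platform attestation ledger",
    sample="all attestations with successful on-chain anchoring",
    window="[platform launch date] through [most recent refresh]",  # placeholder kept from the source
    last_refreshed="[refresh date]",                                # placeholder kept from the source
    source="Bondex internal data",
)

print(example.render())
```

Making every field required means a statistic cannot be rendered without its dataset, sample, window, and refresh date.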

Three conventions sit underneath that format:

The third convention matters more than it sounds. Hiring-data freshness is a common failure mode in industry research; a stat from 2017 quoted in 2026 implies a current reading. Bondex reports never inherit that ambiguity.

The hub organizes Bondex research into four types. Every published piece announces its type so readers know what to expect.

Quarterly reports. Time-series data on Web3 hiring, attestation volume, verification activity, and reputation graph density. Released within thirty days of quarter close. Quarterly reports lean on the longitudinal methodology: same instruments, same definitions, different window.

Annual reports. Synthesis pieces. The annual State of Verified Work report is the canonical year-end compilation: aggregate platform statistics, cohort behavior across the year, comparison against external labor-market data, and the year’s methodology updates. Annual reports include a backward-looking appendix listing every quarterly stat that was revised.

Methodology pieces. How-we-measure-it explainers. When a new construct enters the framework (say, a way to score reputation portability across attestation registries), it gets a methodology piece before it is used in headline reports. The order matters: the methodology is published first, then the data that depends on it.

Topic studies. Focused research on specific phenomena: credential fraud at hire, the geography of verified work, the AI-application flood, the impact of acquisitions on platform reputation graphs. Topic studies pull from the standing methodology but answer a narrower question than the quarterly or annual cadence covers.

The four types share definitions, sources, citation format, and evidence trail. The hub is the spine. Every report plugs in.

The hub welcomes scrutiny because the methodology has named limits. Three of them are worth stating up front.

Bondex’s primary signal source is the worker: what they attest, what their issuers attest about them, what their peers endorse. Employer-side data (applicant volume per role, ATS funnel conversion, recruiter behavior) is incomplete by construction. Where employer-side numbers appear, they come from web3.career operational data or external sources, and the boundary is named in the report.

People who join the Bondex network are, by definition, people who chose to participate in a verification protocol. That self-selection biases the sample toward workers who already value proof.

The behavior of an unverified worker (who they are, why they have not opted in, what their work looks like) is not directly observable from inside the network. Reports that need to characterize the unverified population pull from external labor-market data and say so.

The verification network was much smaller before 2024, both in user count and issuer participation. Longitudinal questions that reach back beyond that point are constrained. The smaller pool means larger confidence intervals, and the issuer mix has changed. Reports that quote pre-2024 numbers state the constraint and avoid implying a direct comparison with later periods.
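The link between pool size and interval width can be made concrete with the textbook normal-approximation interval for a proportion. The sketch below is a generic statistical illustration with made-up sample sizes. It assumes simple random sampling, which a self-selected network does not satisfy, so it shows the direction of the effect, not how Bondex computes its intervals.

```python
import math


def proportion_ci_half_width(p: float, n: int, z: float = 1.96) -> float:
    """Half-width of the normal-approximation confidence interval for a proportion.

    z = 1.96 corresponds to a roughly 95% interval.
    """
    if n <= 0:
        raise ValueError("sample size must be positive")
    return z * math.sqrt(p * (1 - p) / n)


# Made-up sample sizes: a small early pool versus a larger later pool.
early = proportion_ci_half_width(p=0.30, n=500)
later = proportion_ci_half_width(p=0.30, n=50_000)

print(f"early pool: ±{early:.3f}")  # ±0.040
print(f"later pool: ±{later:.3f}")  # ±0.004
```

A pool 100 times larger narrows the interval by a factor of 10, because the width scales with the inverse square root of the sample size.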

The methodology pieces address how the analysis mitigates each limitation. Mitigations are not fixes; they are documented choices, and the choices are open to challenge.

The hub is built to be cited. Three primary use cases:

Quoting Bondex data in external work. Link to the specific report and the specific statistic within it. Every published table carries an anchor link; every headline number carries a citation block. The recommended citation format for external work appears at the bottom of every report and uses the same components described above (dataset, sample, window, refresh date).

Indexing the verified-work category. Reports are structured for passage-level retrieval: definitions appear early, statistics travel with their citation context, and FAQ blocks answer the most common queries in self-contained passages. The hub treats AI extraction as a first-class reader, not an afterthought.

Consuming Bondex datasets directly. Specific datasets can be made available through partnership channels for due-diligence, integration, or research collaboration purposes. Contact details for partnership requests are listed on the Bondex ecosystem page.

External review is welcome. Every report carries a feedback channel. When external review identifies a measurement error or a missing limitation, the report is updated and the change is logged in a versioned changelog. Errata are not buried.

The hub is the foundation for an expanding research program. The directional roadmap, as of 2026-05:

The roadmap is directional, not contractual. Reports ship when the methodology and data are ready. The hub is updated whenever a report adds a new construct to the framework. The rest of the page stays stable; the framework grows in place.

Verified work is a research category because the future of work runs on trust, and trust is what no existing labor record measures. Reputation is the primary currency of the digital economy. The reports that come out of this hub are the receipts.

Bondex’s product surface (the network, the attestation infrastructure, the reputation graph) generates the data. The research surface (this hub, the reports that plug into it, the methodology that holds them together) turns that data into something the rest of the world can cite. This isn’t hype. It’s fundamentals. The hub is the cite anchor for everything that follows.

Verified work is labor activity that has been attested by an issuer with first-hand knowledge of the claim and cryptographically anchored so any third party can verify it independently. Three properties define the category: attested, anchored, portable.

Self-reported claims do not qualify. Single-shot evaluations like interviews or skills tests do not qualify.

The standard reference is the W3C Verifiable Credentials Data Model plus on-chain attestation, layered into a continuous reputation signal.

BLS measures jobs through employer surveys and payroll administrative data; the unit of observation is the job. SHRM measures hiring operations through recruiter surveys; the unit of observation is the hiring process.

Bondex measures the proof underneath any individual hire; the unit of observation is the verifiable record. The three are complementary, not substitutable.

A complete picture of labor combines all three. Bondex reports cite BLS and SHRM data where it adds context, and never frame the candidate-side, attestation-anchored view as a replacement for either.

Bondex datasets can be accessed through structured partnership channels. Specific datasets (anonymized where appropriate, scoped to a research question) can be shared for due-diligence, academic, or partner-integration purposes. Direct queries are not run against personally identifiable records; data is aggregated, anonymized, or both before release. Contact details are on the Bondex ecosystem page.

Quarterly reports use data current to the close of the reporting quarter. The annual report uses data current to the close of the calendar year. Headline statistics on the hub itself are refreshed every ninety days; the “last refreshed” date appears in every citation block. Numbers older than a year are not republished without a fresh pull.
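The refresh rules above reduce to two date checks. The sketch below is a hypothetical illustration: the function and constant names are invented, and it assumes "a year" means 365 days measured from the last refresh date.

```python
from datetime import date, timedelta

# Assumptions for illustration: thresholds taken from the text, names are hypothetical.
HEADLINE_REFRESH_INTERVAL = timedelta(days=90)
MAX_REPUBLISH_AGE = timedelta(days=365)  # "older than a year", read as 365 days


def needs_headline_refresh(last_refreshed: date, today: date) -> bool:
    """True once a headline statistic has gone ninety days without a refresh."""
    return today - last_refreshed >= HEADLINE_REFRESH_INTERVAL


def can_republish(last_refreshed: date, today: date) -> bool:
    """True if the number is recent enough to republish without a fresh pull."""
    return today - last_refreshed <= MAX_REPUBLISH_AGE


today = date(2026, 5, 1)
print(needs_headline_refresh(date(2026, 1, 1), today))  # True  (120 days old)
print(can_republish(date(2026, 1, 1), today))           # True
print(can_republish(date(2025, 1, 1), today))           # False (485 days old)
```

Both checks depend only on the "last refreshed" date, which is why that field appears in every citation block.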